Not An Allergen … But Be Cautious

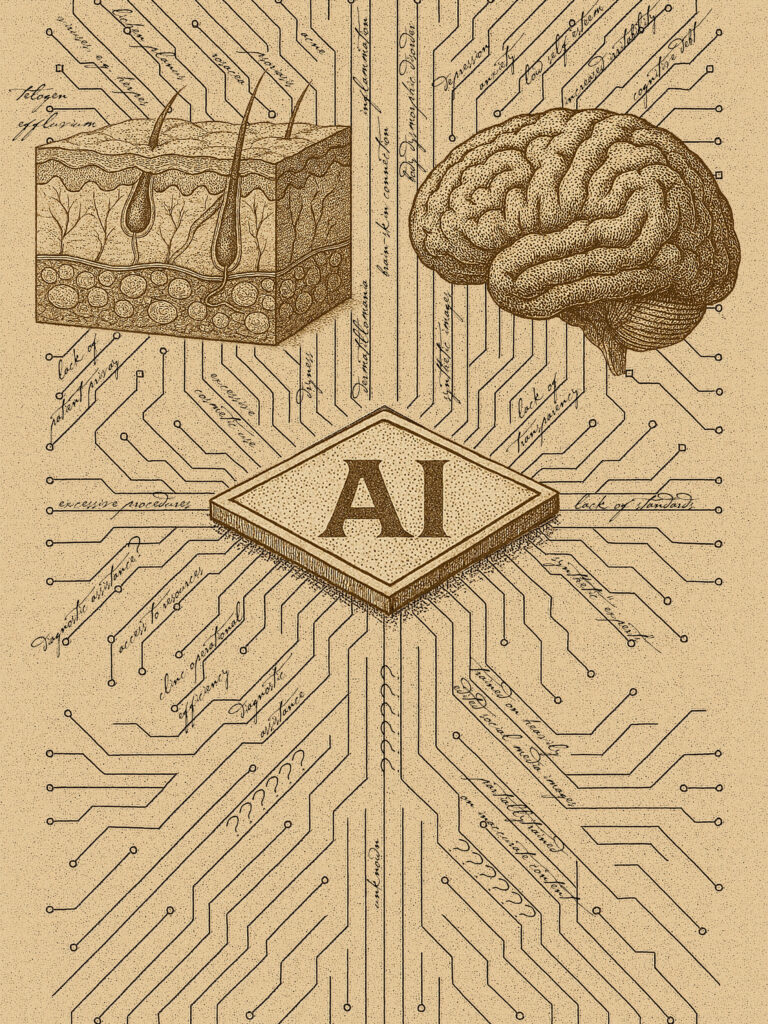

AI (Artificial Intelligence)

We’re not likely to ever see AI in a patch test. It doesn’t come into contact with skin, after all. And there are lots of new developments in AI that show promise in areas as varied as skincare formulation development, clinic operational efficiency, and diagnostics (especially in parts of the world where access to dermatological care is difficult). In fact, the World Health Organization has a Global Initiative on Artificial Intelligence for Skin Conditions to help monitor skin-related neglected tropical diseases and other skin concerns in areas with scarce resources.

So why the caution?

It is very early days, but there are already several concerns, including: an absence of consistent validation and regulatory oversight (such as not having FDA approval); a lack of transparency with regard to scientific sources or legitimacy; the potential to mislead patients; inaccurate diagnoses; patient privacy; entirely synthetic “experts”; depression; and cognitive health.

Lack of evidence and regulatory oversight; inaccurate diagnoses

One review of available dermatological apps using AI concludes that, overall, “these apps lack supporting evidence, input from clinicians and/or dermatologists, and transparency in algorithm development, data usage, and user privacy.” From a summary of the review:

The researchers noted that 10 apps (24.4%) claimed diagnostic capability. However, they explained that none of these had scientific publications as supporting evidence, and 2 apps lacked warnings cautioning patients about the potential inaccuracy of results and the absence of formal medical diagnoses. Also, only 5 apps had supporting peer-reviewed journal publications, and 1 had a preprint article available.

Conversely, 24 apps (58.5%) lacked any information on training and testing datasets. For those with dataset information, the majority offered only vague, general descriptions. Additionally, 21 apps (51.2%) lacked information on algorithm details, and most apps did not specify any clinician input. More specifically, only 16 apps (39.0%) identified that they included dermatologist input.

There are other risks that could lead to inaccurate diagnoses, as the HIPAA Journal shares, including AI “confabulations,” AI unpredictability when dealing with unusual cases, or human over-reliance on AI leading to a decline in verification.

“AI systems also carry operational and clinical risks due to confabulations. Confabulations occur when an AI tool combines unrelated or partially related data elements into a single, inaccurate output. These errors can lead to incorrect summaries, misaligned recommendations, or misleading documentation if they are relied on without verification. AI tools may also behave unpredictably when encountering unusual inputs, edge cases, or ambiguous information …

… Other risks include data leakage, model drift, and over‑reliance on automation. For example, if an AI model is trained on outdated data, its outputs may become less accurate over time. Similarly, workforce members may assume that AI‑generated content is always correct, leading to reduced vigilance and missed errors.”

This Time magazine article emphasizes that AI models can hallucinate: “multiple studies suggest that if there’s missing information in your medical records, models are more likely to hallucinate, or produce incorrect or misleading results.”

Another review, this time for AI for total-body photography, points out that an inaccurate or incomplete diagnosis could come from AI not being able to provide a “real-world clinical approach where skin phenotype and clinical background information are considered.”

Phenotype refers to a patient’s observable characteristics, such as appearance and behavior, all of which are important factors in a physician’s diagnosis and suggestions for care. Even with more data extracted from a patient (lifestyle, diet, etc.), an app cannot observe if a patient looks withdrawn, lethargic, confused, or agitated, for example, or if they exhibit spasms or difficulty with certain movements, or if they have habits like touching parts of their face. Such observations could lead a physician to ask more or different questions, possibly leading to additional instructions, tests, referrals, or diagnoses.

It is further important to note that accuracy might not be the tech’s priority, as the Time article points out: “models are designed to prioritize being helpful over medical accuracy—and to always supply an answer, especially one that the user is likely to respond to.”

That AI prioritizes engagement over accuracy, safety, or health could perhaps partly explain reports of the tech encouraging users to take violent action or harm themselves, as seen in this MIT Technology Review article. Other articles have reported on AI sending threatening messages or sharing dangerous health tips.

The impact of such limitations is significant. Many of these apps purport to offer mole mapping (important for skin cancer prevention and early detection) and even to diagnose skin cancer and other skin and hair conditions. Other apps offer treatment or monitoring of skin conditions that require proper medical management, such as atopic dermatitis and acne.

The British Association of Dermatologists issued a warning regarding the potentially misleading claims of skin cancer apps to provide an accurate diagnosis and published a position paper to help define standards for dermatological AI tools. They also created a set of guidelines for patients to spot potential red flags in dermatological AI apps. Such guidelines are helpful but without regulatory oversight governing these apps and what they promise, consumers are at risk of being misled.

Patient privacy

Making your personal information more accessible can be helpful from a clinical management perspective but it does have its risks, including less control over who sees your images, preferences, medical history, and diagnoses. This is especially true with AI apps because many of them are unregulated and either do not disclose or are unclear about how your data is stored, used, or shared.

Generative AI learns by accessing huge datasets, and these can include information that you share about yourself. Even small amounts of information that you share in various ways (your personal Claude or ChatGPT conversations, the data that your health tracking device monitors, what you post on social media, etc.) could potentially be used to identify you, as this study on healthcare data privacy in the AI era notes: “Many applications of AI in healthcare involve the consumption of protected health information as well as unprotected data generated by the users themselves, such as health trackers on smart devices, Internet search history and inferences from shopping patterns, or by entities not covered by protective laws such as Health Insurance Portability and Accountability Act (HIPAA) … such data can be re-identified through triangulation with these other identifiable data sets. This is especially true in cases of AI backed by information technology behemoths like Google, Apple, and Meta.”

Besides your information being scoured by generative AI systems training themselves, there’s also the risk of your data being breached simply from technical failures like data leaks. The same study emphasizes that “the data used for AI applications usually has to be uploaded to one or more cloud servers or Graphics Processing Units (GPUs), which adds another level in data processing where potential data compromise can occur.”

When handled securely (such as by anonymizing patient information), such huge datasets can be very important in research. During the COVID-19 pandemic, for example, such datasets allowed for rapid learning and sharing to help guide care and safety protocols around the world. But individual apps that make use of AI for healthcare may or may not keep your information confidential. From the Time article on giving AI your medical information:

“No federal regulatory body governs the health information provided to AI chatbots, and ChatGPT provides technology services that are not within the scope of HIPAA. ‘It’s a contractual agreement between the individual and OpenAI at that point,’ says Bradley Malin, a professor of biomedical informatics at Vanderbilt University Medical Center …

‘When you go to your health care provider and you have an interaction with them, there’s a professional agreement that they’re going to maintain this information in a confidential manner, but that’s not the case here,’ Malin says. ‘You don’t know exactly what they are going to do with your data. They say that they’re going to protect it, but what exactly does that mean?’”

Entirely synthetic AI-generated images, influencers, and “experts”

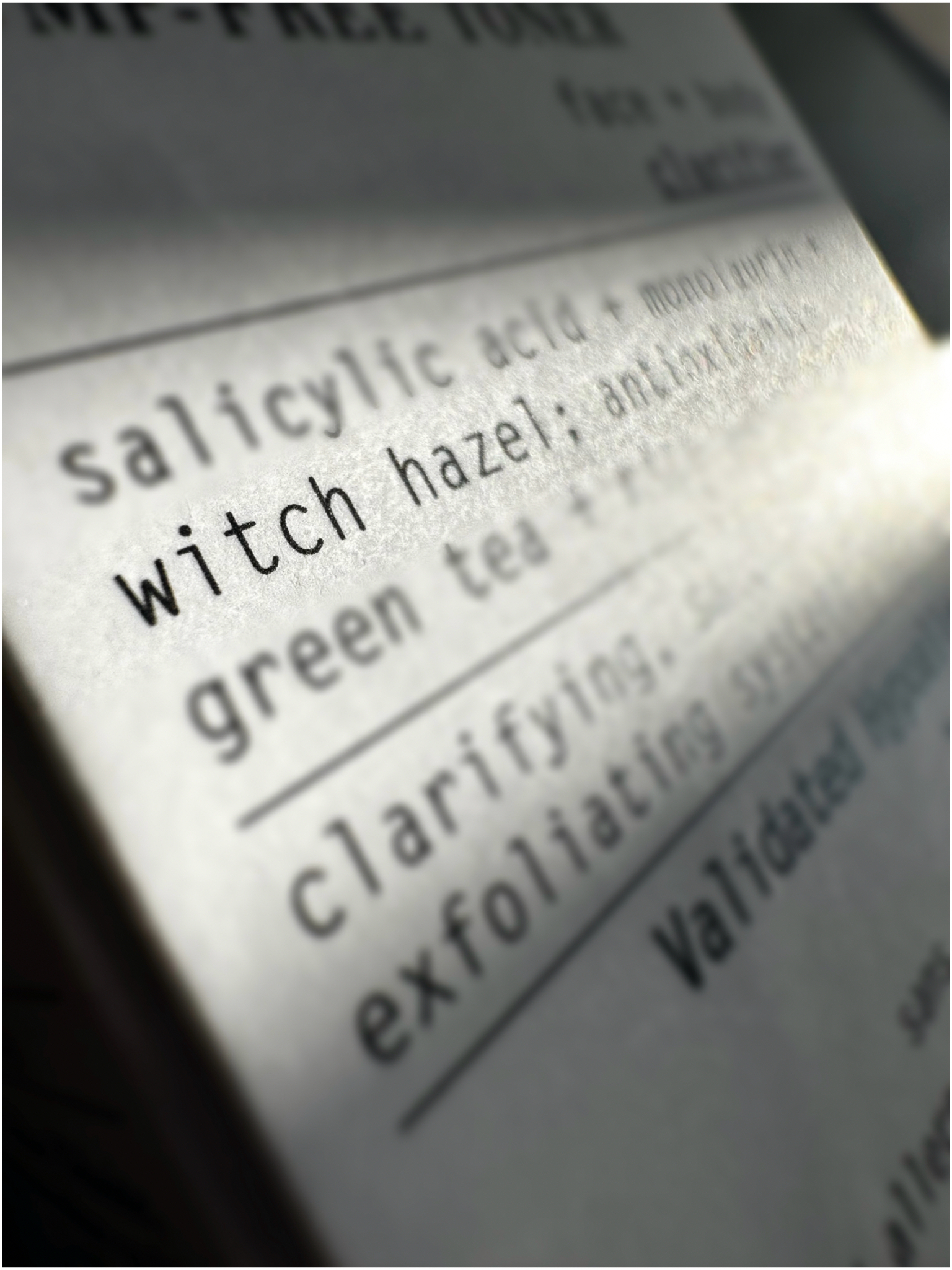

AI skincare apps can tap into enormous datasets to make personalized recommendations. This can be helpful to individuals looking for customized product or regimen suggestions. But biases in AI models (most are trained on datasets that lack diversity) and privacy concerns remain. This review adds another: “the human touch remains essential, particularly in accounting for personal preferences and subjective skincare experiences.”

Customers might not realize that the expert they’re speaking to doesn’t exist. AI chatbots are already replacing real humans as customer service representatives. Depending on how they are programmed or trained (which is not usually disclosed), these chatbots may miss medically relevant information or give wrong advice. Remember that these apps are not regulated, often do not have physician input and oversight, and currently have no minimum scientific standards that they are required to meet. Without proper disclaimers, customers and patients may believe they are chatting with a skincare expert or health professional.

A creative agency in the UK points out additional potential risks with AI in the beauty industry, among them fake reviews of skincare and beauty products (which could mislead customers), completely AI-generated skincare influencers (which could be presenting themselves as legitimate experts and could be sharing inaccurate or dangerous health advice), and the increasing use of entirely AI-generated inspirational or “actual” before-and-after images to promote products or procedures (being entirely synthetic, these images can easily exaggerate or falsify promised results).

It is important to remember that AI uses publicly available information, including social media content, to train itself. Health-related social media content is not regulated, and studies confirm that the majority of dermatological information on social media is not posted by board-certified dermatologists.

Further troubling is that, especially among younger people, an influencer’s number of followers often trumps legitimate qualifications. And qualified physicians may be unable to create a strong social media presence for several reasons, including: their country’s medical organizations having strict regulations controlling the social media presence of boarded physicians, the algorithm prioritizing controversial content that elicits strong emotions over facts, or the demands of their clinical and research work preventing them from devoting much time to growing a social media presence. In other words, an impactful social media presence may be possible for professional content creators and not for qualified medical professionals.

It is worth emphasizing that algorithms push what is more likely to get attention, which tends to be highly controversial or inaccurate content. As this study emphasizes: “The proliferation of dermatologic content on social media poses challenges to information integrity, with media algorithms potentially rapidly amplifying unverified claims.” The same study shares results of analyses across several other studies:

YouTube videos on allergic contact dermatitis often contain poor-quality medical information; 60% of psoriasis patients on Facebook reported exposure to misleading content, and 57% encountered covert promotional material related to their skin condition.

AI apps could easily be repeating “expert advice” from extremely popular but dubious or unqualified sources. Or, as some dermatologists do share content but do not disclose ties with commercial brands, AI apps could be repeating what is, in essence, promotional content disguised as legitimate health guidance.

AI use, depression, and skin

Depression, stress, and anxiety are damaging to skin, enough so that psychodermatology is a growing specialty. Check out this post for more about the important brain-skin connection (the “feedback mechanisms and crosstalk between the brain and the skin”), how mental health affects specific skin conditions and skin health overall, as well as some helpful tips.

Recent lawsuits have found social media platforms guilty of purposefully designing their algorithms to be addictive, harming young people’s mental health. Studies show that the exaggerated images rampant on social media (part of what these algorithms push) can lead to unrealistic expectations for skincare and contribute to low self-esteem, an obsession with imagined skin and body defects, body dysmorphic disorder, and excessive use of cosmetics and cosmetic procedures. This study shares information specific to skin concerns:

Social media use among adolescents with acne or atopic dermatitis can contribute to unrealistic appearance expectations and exacerbate mental health conditions, including body dysmorphic disorder, anxiety, and depression. Photo filters and editing tools can distort perceived standards of appearance, potentially worsening the psychological burden for those with visible skin conditions. This is particularly concerning as adolescents with such conditions are already vulnerable to emotional distress and social impairment.

Again, generative AI uses publicly available social media content to train itself, and social media images are often heavily filtered or otherwise edited. It is possible that entirely AI-generated images of skin health and beauty would, at least in part, be based on the kind of unrealistic social media images that studies have linked to unhealthy self-image issues.

Although widespread AI use is still new, initial studies indicate that it may contribute to mental health issues. One such study, based on a survey of over 20,000 users, showed that “AI use was significantly associated with greater depressive symptoms,” especially among younger age groups. The study added that “similar patterns were observed for anxiety and irritability” and that the highest estimates were among people who used AI for personal use. Note that this is just one study and that it does have limitations. More studies are necessary to better understand the correlation (if there is one) between AI use and mental health.

Still, there are a growing number of studies on the impact of social media on mental health, enough so that New York City has classified social media as a public health threat and several countries have followed Australia’s lead in either banning or more strictly controlling social media access (especially among children and young teens). Generative AI is being trained on publicly available social media posts — including heavily filtered and edited images and inaccurate skin and health content. It is not too much of a stretch to believe that AI images and advice may be based on such unrealistic or inaccurate skin and health content.

Cognitive health and our ability to judge health advice and options

It is unclear how (if at all) extensive AI use could impact our cognitive abilities such as critical thinking. A very early study out of MIT showed that “ChatGPT users (in the study) had the lowest brain engagement and ‘consistently underperformed at neural, linguistic, and behavioral levels.’” In this article reporting on the study, a psychiatrist who specializes in kids and teens adds: “From a psychiatric standpoint, I see that overreliance on these LLMs (Large Language Models) can have unintended psychological and cognitive consequences, especially for young people whose brains are still developing … These neural connections that help you in accessing information, the memory of facts, and the ability to be resilient: all that is going to weaken.”

How might this cognitive weakening (if it does occur) impact us in terms of how we judge which medical sources are legitimate, whom to trust with our dermatological care, and how to choose products and procedures? It is far too early to know or even guess. But recent reports of studies suggest that Gen Z “is the first generation less cognitively capable than their parents” due, in part, to screen learning. It is interesting to view this in light of other studies that seem to show that note-taking by hand and reading physical books result in higher cognitive scores.

Non-screen learning, reading, and work could be better for our cognitive health. This matters as we navigate the massive amount of information available to and forced upon us on a daily basis (even more so as AI use increases) and try to make wise decisions about our dermatological and overall health care.

Trust your dermatologist

We would suggest not (yet) trusting AI with your diagnosis, skin care, or the privacy of your information or images. We would also caution against heavy use of AI in case it could impact your mental health, which could, in turn, impact your skin health.

Trust your properly boarded dermatologist.

- They have medical credentials that they had to earn and have to renew regularly.

- They continue studying, drawing on legitimate, peer-reviewed sources held to rigorous standards and transparent checks and balances that constantly validate results.

- Many dermatologists also research and/or teach, contributing to the science itself.

- Your dermatologist is required to keep your information and images confidential.

- They can help guide you towards realistic goals.

- They can help you judge the deluge of information and recommendations that you’re getting from social media and AI.

- They don’t just see your skin but can touch and feel to check for texture and temperature and other important physical indicators.

- Your dermatologist can observe other aspects of you that could provide clues to your overall health.

And they care for all of you, including what AI cannot see or interpret (the human interaction between you and your physician can be healing in its own right).

Subscribe to VMVinSKIN.com and our YouTube channel for more hypoallergenic tips and helpful “skinformation”!

If you have a history of sensitive skin…

…don’t guess! Random trial and error can cause more damage. Ask your dermatologist about a patch test.

To shop our selection of hypoallergenic products, visit vmvhypoallergenics.com. Need help? Ask us in the comments section below, or for more privacy (such as when asking us to customize recommendations for you based on your patch test results) contact us by email, or drop us a private message on Facebook.

For more:

- On the prevalence of skin allergies, see Skin Allergies Are More Common Than Ever.

- For the difference between irritant and allergic reactions, see It’s Complicated: Allergic Versus Irritant Reaction.

- For the difference between food, skin, and other types of reactions: see Skin & Food Allergies Are Not The Same Thing.

- On the differences between hypoallergenic, natural, and organic, check out Is Natural Hypoallergenic? and this video in our YouTube channel.

- To learn about the VH-Rating System and hypoallergenicity: What Is The Validated Hypoallergenic Rating System?

Main References:

Regularly published reports on the most common allergens by the North American Contact Dermatitis Group and European Surveillance System on Contact Allergies (based on over 28,000 patch test results, combined), plus other studies. Remember, we are all individuals — just because an ingredient is not on the most common allergen lists does not mean you cannot be sensitive to it, or that it will not become an allergen. These references, being based on so many patch test results, are a good basis but it is always best to get a patch test yourself.

- Wongvibulsin S, Yan MJ, et al. Current State of Dermatology Mobile Applications With Artificial Intelligence Features. JAMA Dermatol. 2024;160(6):646–650. doi:10.1001/jamadermatol.2024.0468.

- Primiero CA, Rezze GG, et al. A Narrative Review: Opportunities and Challenges in Artificial Intelligence Skin Image Analyses Using Total Body Photography. J Invest Dermatol. 2024 Jun;144(6):1200-1207. doi: 10.1016/j.jid.2023.11.007. Epub 2024 Jan 16. PMID: 38231164.

- Doctors issue warning about dangerous AI-based diagnostic skin cancer apps. British Association of Dermatologists. June 23, 2022. Accessed April 8, 2026. https://www.bad.org.uk/doctors-issue-warning-about-dangerous-ai-based-skin-cancer-apps.

- Behara K, Bhero E, et al. AI in dermatology: a comprehensive review into skin cancer detection. PeerJ Comput Sci. 2024 Dec 5;10:e2530. doi: 10.7717/peerj-cs.2530. PMID: 39896358; PMCID: PMC11784784.

- Hash MG, Forsyth A, et al. Artificial Intelligence in the Evolution of Customized Skincare Regimens. Cureus. 2025 Apr 18;17(4):e82510. doi: 10.7759/cureus.82510. PMID: 40385841; PMCID: PMC12085869.

- Boyle, M. (2025). The Dark Side of AI in Health & Beauty: Ux Agency Northern Ireland, London, Birmingham. Retrieved from https://ideasandoutcomes.com/insights/the-dark-side-of-ai-in-health-beauty

- Laughter MR, Anderson JB, et al. Psychology of aesthetics: Beauty, social media, and body dysmorphic disorder. Clin Dermatol. 2023 Jan-Feb;41(1):28-32. doi: 10.1016/j.clindermatol.2023.03.002. Epub 2023 Mar 5. PMID: 36882132.

- Gill, R. (2021). Changing the perfect picture: Smartphones, social media and. Retrieved from https://www.citystgeorges.ac.uk/__data/assets/pdf_file/0005/597209/Parliament-Report-web.pdf

- How social media can harm your body image. (2023). Retrieved from https://health.clevelandclinic.org/social-media-and-body-image

- Thunga S, Khan M, et al. AI in Aesthetic/Cosmetic Dermatology: Current and Future. J Cosmet Dermatol. 2025 Jan;24(1):e16640. doi: 10.1111/jocd.16640. Epub 2024 Nov 7. PMID: 39509562; PMCID: PMC11743249.

- J. G. Labadie, S. Hogan, et al, “Social Media and the Dermatologist,” Journal of Drugs in Dermatology: JDD 23, no. 12 (December 2024): 1125.

- Linkov, PG, Vuckovic, M. “Which is Better? An Academic Reputation or 100,000 Followers? Social Media’s Impact on Reputation.” In: Advances in Cosmetic Surgery, eds. Branham, G. H., Dover, J. S. et al, 2023. 6, 1st ed., 137–141.

- Nguyen M, Case S, et al. The use of social media platforms to discuss and educate the public on allergic contact dermatitis. Contact Dermatitis. 2022 Mar;86(3):196-203. doi: 10.1111/cod.14004. Epub 2021 Dec 12. PMID: 34741559.

- Ranpariya V, Chu B, et al. Dermatology without dermatologists? Analyzing Instagram influencers with dermatology-related hashtags. J Am Acad Dermatol. 2020 Dec;83(6):1840-1842. doi: 10.1016/j.jaad.2020.05.039. Epub 2020 May 13. PMID: 32416205.

- Rey, A.B. and Tan, J. (2025), Social Media in Dermatology and Skin Health: Challenges and Opportunities. JEADV Clinical Practice, 4: S59-S63. https://doi.org/10.1002/jvc2.70096

- Yadav N, Pandey S, et al. Data Privacy in Healthcare: In the Era of Artificial Intelligence. Indian Dermatol Online J. 2023 Oct 27;14(6):788-792. doi: 10.4103/idoj.idoj_543_23. PMID: 38099022; PMCID: PMC10718098.

- Steinhoff, M, Dréno, B, et al. “The Skin-Brain Dialogue: Advancing Psychodermatology Through Integrated Approaches,” JEADV Clin Pract 4, no. Suppl. 1 (2025)

- Rieder EA, Andriessen A, et al. Dermatology in Contemporary Times: Building Awareness of Social Media’s Association With Adolescent Skin Disease and Mental Health. J Drugs Dermatol. 2023 Aug 1;22(8):817-825. doi: 10.36849/jdd.7596. PMID: 37556525.

- Perlis RH, Gunning FM, et al. Generative AI Use and Depressive Symptoms Among US Adults. JAMA Netw Open. 2026;9(1):e2554820. doi:10.1001/jamanetworkopen.2025.54820

- Lițan D-E (2025) Mental health in the “era” of artificial intelligence: technostress and the perceived impact on anxiety and depressive disorders—an SEM analysis. Front. Psychol. 16:1600013. doi: 10.3389/fpsyg.2025.1600013

- Chen Y, Lyga J. Brain-skin connection: stress, inflammation and skin aging. Inflamm Allergy Drug Targets. 2014;13(3):177-90

- Kos’myna, N. (2025). Your Brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing task. Retrieved from https://www.media.mit.edu/publications/your-brain-on-chatgpt/

- Al-Sharman A, Shalash RJ, et al. Exploring the impact of note taking methods on cognitive function among university students. BMC Med Educ. 2025 Aug 28;25(1):1218. doi: 10.1186/s12909-025-07593-x. PMID: 40877847; PMCID: PMC12392625.

- Perbal B. Neuroscience and psychological studies sustain the cognitive benefits of print reading. J Cell Commun Signal. 2017 Mar;11(1):1-4. doi: 10.1007/s12079-017-0379-5. Epub 2017 Feb 2. PMID: 28155112; PMCID: PMC5362581.

- Houle M-C, DeKoven JG, et al. North American Contact Dermatitis Group Patch Test Results: 2021–2022. Dermatitis®. 2025;36(5):464-476. doi:10.1089/derm.2024.0474

- Urban K, Giesey R, et al. A Guide to Informed Skincare: The Meaning of Clean, Natural, Organic, Vegan, and Cruelty-Free. J Drugs Dermatol. 2022 Sep 1;21(9):1012-1013.

- DeKoven JG, Warshaw EM, Reeder MJ, Atwater AR, Silverberg JI, Belsito DV, Sasseville D, Zug KA, Taylor JS, Pratt MD, Maibach HI, Fowler JF Jr, Adler BL, Houle MC, Mowad CM, Botto N, Yu J, Dunnick CA. North American Contact Dermatitis Group Patch Test Results: 2019-2020. Dermatitis. 2023 Mar-Apr;34(2):90-104. doi: 10.1089/derm.2022.29017.jdk. Epub 2023 Jan 19. PMID: 36917520.

- Uter W, Wilkinson SM, Aerts O, Bauer A, Borrego L, Brans R, Buhl T, Dickel H, Dugonik A, Filon FL, Garcìa PM, Giménez-Arnau A, Patruno C, Pesonen M, Pónyai G, Rustemeyer T, Schubert S, Schuttelaar MA, Simon D, Stingeni L, Valiukevičienė S, Weisshaar E, Werfel T, Gonçalo M; ESSCA and EBS ESCD working groups, and the GEIDAC. Patch test results with the European baseline series, 2019/20-Joint European results of the ESSCA and the EBS working groups of the ESCD, and the GEIDAC. Contact Dermatitis. 2022 Oct;87(4):343-355. doi: 10.1111/cod.14170. Epub 2022 Jun 24. PMID: 35678309. https://pubmed.ncbi.nlm.nih.gov/35678309/

- DeKoven JG, Silverberg JI, Warshaw EM, Atwater AR, et al. North American Contact Dermatitis Group Patch Test Results: 2017-2018. Dermatitis. 2021 Mar-Apr 01;32(2):111-123.

- DeKoven JG, Warshaw EM, Zug KA, et al. North American Contact Dermatitis Group Patch Test Results: 2015-2016. Dermatitis. 2018 Nov/Dec;29(6):297-309.

- DeKoven JG, Warshaw EM, Belsito DV, et al. North American Contact Dermatitis Group Patch Test Results 2013-2014. Dermatitis. 2017 Jan/Feb;28(1):33-46.

- Uter W, et al. The European Baseline Series in 10 European Countries, 2005/2006: Results of the European Surveillance System on Contact Allergies (ESSCA). Contact Dermatitis. 2009;61(1):31-38.

- Wetter, DA et al. Results of patch testing to personal care product allergens in a standard series and a supplemental cosmetic series: An analysis of 945 patients from the Mayo Clinic Contact Dermatitis Group, 2000-2007. J Am Acad Dermatol. 2010 Nov;63(5):789-98.

- Warshaw EM, Buonomo M, DeKoven JG, et al. Importance of Supplemental Patch Testing Beyond a Screening Series for Patients With Dermatitis: The North American Contact Dermatitis Group Experience. JAMA Dermatol. 2021 Dec 1;157(12):1456-1465.

- Verallo-Rowell VM. The validated hypoallergenic cosmetics rating system: its 30-year evolution and effect on the prevalence of cosmetic reactions. Dermatitis 2011 Apr; 22(2):80-97.

- Ruby Pawankar et al. World Health Organization. White Book on Allergy 2011-2012 Executive Summary.

- Misery L et al. Sensitive skin in the American population: prevalence, clinical data, and role of the dermatologist. Int J Dermatol. 2011 Aug;50(8):961-7.

- Warshaw EM, Maibach HI, Taylor JS, Sasseville D, DeKoven JG, Zirwas MJ, Fransway AF, Mathias CG, Zug KA, DeLeo VA, Fowler JF Jr, Marks JG, Pratt MD, Storrs FJ, Belsito DV. North American contact dermatitis group patch test results: 2011-2012. Dermatitis. 2015 Jan-Feb;26(1):49-59.

- Warshaw, E et al. Allergic patch test reactions associated with cosmetics: Retrospective analysis of cross-sectional data from the North American Contact Dermatitis Group, 2001-2004. J Am Acad Dermatol 2009;60:23-38.

- Marks JG, Belsito DV, DeLeo VA, et al. North American Contact Dermatitis Group patch-test results, 1998 to 2000. Am J Contact Dermat. 2003;14(2):59-62.

- Warshaw EM, Belsito DV, Taylor JS, et al. North American Contact Dermatitis Group patch test results: 2009 to 2010. Dermatitis. 2013;24(2):50-99.

- Verallo-Rowell V. M, Katalbas S.S. & Pangasinan J. P. Natural (Mineral, Vegetable, Coconut, Essential) Oils and Contact Dermatitis. Curr Allergy Asthma Rep 16, 51 (2016). https://doi.org/10.1007/s11882-016-0630-9.

- de Groot AC. Monographs in Contact Allergy, Volume II – Fragrances and Essential Oils. Boca Raton, FL: CRC Press Taylor & Francis Group; 2019.

- de Groot AC. Monographs in Contact Allergy, Volume I – Non-Fragrance Allergens in Cosmetics (Part 1 and Part 2). Boca Raton, FL: CRC Press Taylor & Francis Group; 2018.

- Zhu TH, Suresh R, Warshaw E, et al. The Medical Necessity of Comprehensive Patch Testing. Dermatitis. 2018 May/Jun;29(3):107-111.

Want more great information on contact dermatitis? Check out the American Contact Dermatitis Society, Dermnet New Zealand, the Contact Dermatitis Institute, and your country’s contact dermatitis association.

Laura is our “dew”-good CEO at VMV Hypoallergenics and eldest daughter of VMV’s founding dermatologist-dermatopathologist. She has two children, Madison and Gavin, and works at VMV with her family and VMV’s signature “skinfatuated, skintellectual, skingenious” team. In addition to saving the world’s skin, Laura is passionate about health, cultural theory, human rights, happiness, and spreading goodness (like a VMV cream)!